Data Science: Design of Experiments Interview Questions

Category: Data Science Posted: Mar 29, 2019 By: Ashley Morrison

1. Google wants to add a new button to their main search page. How can they determine whether or not people enjoy this new button feature?

Answer: This type of question is common and essentially tests whether you understand the concept of an A/B test. First, decide on the metrics to compare across the two versions of the site you will serve.

Common metrics include Daily Active Users (DAU), Monthly Active Users (MAU), Click-Through Rate (CTR), impressions, and engagement. Think about the actual situation before choosing; basic metrics such as DAU and MAU are a reasonable place to start.

- The second step is to prepare two or more versions of your site or page.

- One version has no feature change (the control); the other includes the new feature. You then serve the different versions of the page to separate groups of users.

- Split the population randomly, taking care not to introduce bias in the split. Once this set-up is done, you can define your statistical hypothesis test.

- Create a null hypothesis for the test, for example: the chosen metric will be the same for both groups.

- Pre-define your acceptable Type I error rate (the false positive rate, or alpha).

- Compare the p-value of your test against alpha to decide whether the results are significant. You will also want to assess the power of the statistical test.

- Power is 1 - β, where β is the Type II error rate. You will also want to decide how long to run the experiment and settle on a protocol for handling outliers, such as truncation.
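The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the function names `assign_variant` and `two_proportion_z_test`, the experiment label, and the click counts are all made up for the example, and it assumes the chosen metric is a click-through rate compared with a two-sided two-proportion z-test.

```python
import hashlib
from math import sqrt
from statistics import NormalDist


def assign_variant(user_id: str, experiment: str = "new_button") -> str:
    """Deterministically bucket a user into 'control' or 'treatment'.

    Hashing the (hypothetical) experiment name together with the user id
    gives a stable, roughly 50/50 split that does not depend on user traits.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return "treatment" if int(digest, 16) % 2 else "control"


def two_proportion_z_test(clicks_a, users_a, clicks_b, users_b):
    """Two-sided z-test for H0: both groups share the same click-through rate."""
    rate_a = clicks_a / users_a
    rate_b = clicks_b / users_b
    # Under H0 the best estimate of the common rate pools both groups.
    pooled = (clicks_a + clicks_b) / (users_a + users_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / users_a + 1 / users_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


ALPHA = 0.05  # Type I error rate, fixed before looking at the data

# Made-up counts for illustration only.
z, p_value = two_proportion_z_test(clicks_a=1000, users_a=10000,
                                   clicks_b=1100, users_b=10000)
print(f"z = {z:.2f}, p = {p_value:.4f}, reject H0: {p_value < ALPHA}")
```

Fixing `ALPHA` before the experiment starts is the point of the sketch: the decision rule is set in advance and the p-value is only compared against it afterwards.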


2. How can you know if a sample is biased? What different kinds of biases should you be aware of when using a sample?

Answer: If you know the true mean of the population you sampled from, you can resample from your sample many times (with replacement) and check whether the means of those resamples are distributed around the true population mean. This is a form of bootstrapping. If the resample means center somewhere else, the sample is likely biased. Be aware of the following kinds of bias:

a) Selection Bias:

- By some error, you have excluded a specific part of the population.

- Example: estimating the average weight of people in the USA by sampling only one state.

b) Measurement Bias: The method of measurement produces observations that differ from the true values. This can happen when you sample from a stream of information and your sampling rate is lower than the rate of change in the stream. Suppose a device streams a signal that jumps up to a high value for half a second, then drops back to its average value for the next half-second, and keeps alternating this way. If you take only one sample every second, you can land on the low half of every cycle and never see the high peaks. Your measurements then do not reflect the actual stream, which is a measurement bias.
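The streaming example can be reproduced with a toy signal. The sketch below is an illustration under assumed numbers: the function names and the baseline and peak values (1.0 and 5.0) are hypothetical, and the signal is taken to sit at the peak for the first half of every second and at the baseline for the second half.

```python
def stream_value(t: float, baseline: float = 1.0, peak: float = 5.0) -> float:
    """Toy signal: peak during the first half of each second, baseline after."""
    return peak if (t % 1.0) < 0.5 else baseline


def mean_of_samples(start: float, interval: float, count: int) -> float:
    """Average the signal at `count` evenly spaced sample times."""
    times = [start + i * interval for i in range(count)]
    return sum(stream_value(t) for t in times) / count


# One sample per second, always landing in the low half of the cycle.
slow = mean_of_samples(start=0.75, interval=1.0, count=100)

# Four samples per second cover both halves of every cycle.
fast = mean_of_samples(start=0.125, interval=0.25, count=400)

print(f"slow sampler mean: {slow}, fast sampler mean: {fast}")
```

The slow sampler reports only the baseline, while the faster one recovers the midpoint between baseline and peak. Had the slow sampler started in the first half of the cycle it would have seen only peaks, so the bias can go in either direction.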


3. Facebook is testing different designs of the user homepage. They have come up with 50 variations. They would like to test all of them and choose the best. How would you set up this experiment? What metrics would you calculate and how would you report the results?

Answer: This is slightly different from a straight A/B test because we are dealing with more than two variations. Several statistical techniques exist for this sort of problem; one common approach is discussed here.

T-tests among Pairs of Treatments:

- This is similar to an A/B test except we randomly assign all 50 versions to users.

- There are a few things to consider with this approach, because we are running t-tests among multiple pairs of treatments rather than on a single pair.

- With so many versions you will need to make sure you have enough users to get a statistically significant result (this usually is not an issue for Facebook).

- With multiple hypothesis tests you increase the likelihood of a rare event occurring by chance, so you need to adjust the alpha value against which you compare each p-value.

- Since this is a multiple inference problem you can correct your alpha value using the Bonferroni correction method. Typically we set alpha to 0.05, but with the Bonferroni correction we divide this by the number of tests.

- In this case, treating the problem as 50 tests, the corrected alpha is 0.05/50 = 0.001.

That becomes the alpha value we test each p-value against, and we will clearly need a much larger population for testing. Otherwise, with such a small alpha, we would never achieve statistical significance for anything.
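The arithmetic behind the correction is easy to check. The sketch below uses hypothetical helper names, and the family-wise error rate formula assumes the tests are independent, which is a simplification.

```python
from math import comb


def bonferroni_alpha(alpha: float, num_tests: int) -> float:
    """Per-test significance threshold under the Bonferroni correction."""
    return alpha / num_tests


def family_wise_error_rate(alpha: float, num_tests: int) -> float:
    """Chance of at least one false positive across independent tests."""
    return 1 - (1 - alpha) ** num_tests


ALPHA = 0.05
NUM_TESTS = 50

corrected = bonferroni_alpha(ALPHA, NUM_TESTS)
uncorrected_fwer = family_wise_error_rate(ALPHA, NUM_TESTS)
corrected_fwer = family_wise_error_rate(corrected, NUM_TESTS)

print(f"corrected alpha: {corrected:.4f}")
print(f"FWER without correction: {uncorrected_fwer:.3f}")
print(f"FWER with correction: {corrected_fwer:.3f}")

# If every pair of the 50 designs were compared, the number of tests
# would be far larger than 50, and the threshold smaller still.
all_pairs = comb(NUM_TESTS, 2)
print(f"pairwise comparisons among 50 designs: {all_pairs}")
```

Without any correction, 50 tests at alpha = 0.05 make at least one false positive very likely, which is why the per-test threshold has to shrink.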

4. What is the definition of power for a statistical test? What factors affect the power and how does the power relate to the p-value?

Answer:

- The power of any test of statistical significance is defined as the probability that it will reject a false null hypothesis.

- Statistical power is therefore inversely related to β, the probability of making a Type II error. In short, power = 1 - β.

- Statistical power is the likelihood that a study will detect an effect when there is an effect there to be detected. If statistical power is high, the probability of making a Type II error, or concluding there is no effect when, in fact, there is one, goes down.

- Statistical power is affected chiefly by the size of the effect and the size of the sample being used to detect it.

- Bigger effects are easier to detect than smaller effects, while large samples offer greater test sensitivity than small samples.

- When you perform a test, the p-value is the smallest α for which the test would reject the null hypothesis. Equivalently, it is the significance level at which the observed test statistic would lie on the boundary of the rejection region.

- By comparing the p-value with the chosen Type I error rate (α), we decide whether to reject the null hypothesis.

- If we set a larger α, we allow larger p-values to be considered significant, which increases the power of the test at the cost of more false positives.
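These relationships can be checked numerically. The sketch below assumes a two-sided, two-sample z-test with equal group sizes and a known standard deviation; the function name `power_two_sample_z` and the example numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def power_two_sample_z(effect, sigma, n_per_group, alpha=0.05):
    """Power of a two-sided two-sample z-test with known sigma."""
    norm = NormalDist()
    z_crit = norm.inv_cdf(1 - alpha / 2)
    # True difference in means, measured in standard errors.
    shift = effect / (sigma * sqrt(2 / n_per_group))
    # Probability the test statistic falls in either rejection tail.
    return norm.cdf(shift - z_crit) + norm.cdf(-shift - z_crit)


base = power_two_sample_z(effect=0.2, sigma=1.0, n_per_group=100)
bigger_effect = power_two_sample_z(effect=0.4, sigma=1.0, n_per_group=100)
bigger_sample = power_two_sample_z(effect=0.2, sigma=1.0, n_per_group=400)
bigger_alpha = power_two_sample_z(effect=0.2, sigma=1.0, n_per_group=100,
                                  alpha=0.10)

print(f"baseline power:      {base:.3f}")
print(f"larger effect:       {bigger_effect:.3f}")
print(f"larger sample:       {bigger_sample:.3f}")
print(f"larger alpha (0.10): {bigger_alpha:.3f}")
```

Each change (a larger effect, a larger sample, or a larger α) raises the power relative to the baseline. When the true effect is zero, the same formula returns α, the false positive rate.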

More Questions on Design of Experiments:

5. Point out the correct statement:

a) If equations are known but the parameters are not, they may be inferred with data analysis

b) If equations are not known but the parameters are, they may be inferred with data analysis

c) If neither the equations nor the parameters are known, they may be inferred with data analysis

d) None of the Mentioned

Answer: a) Usually the random component of data is measurement error.

6. Point out the wrong statement:

a) Randomized studies are not used to identify causation

b) Complicated approaches exist for inferring causation

c) Causal relationships may not apply to every individual

d) All of the Mentioned

Answer: a) Randomized studies are usually used to identify causation.


7. Which of the following is a good way of performing experiments in data science?

a) Measure variability

b) Generalize the problem

c) Have Replication

d) All of the Mentioned

Answer: d) Experiments on causal relationships investigate the effect of one or more variables on one or more outcome variables.

8. Which of the following data mining techniques is used to uncover patterns in data?

a) Data bagging

b) Data booting

c) Data merging

d) Data Dredging

Answer: d) Data dredging, also called data snooping, refers to the practice of misusing data mining techniques to show misleading scientific ‘research’.
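A small simulation shows why dredging produces misleading results. The sketch below is an illustration under assumptions not in the question: it runs many z-tests on pure noise (standard normal samples with a true mean of zero and a known standard deviation of 1), so every "significant" result is a false positive. The names and the number of tests are hypothetical.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean


def null_p_value(rng: random.Random, n: int = 30) -> float:
    """p-value of a two-sided z-test on noise whose true mean is zero."""
    sample = [rng.gauss(0, 1) for _ in range(n)]
    z = fmean(sample) * sqrt(n)  # known sigma = 1
    return 2 * (1 - NormalDist().cdf(abs(z)))


rng = random.Random(0)  # fixed seed so the run is repeatable
NUM_TESTS = 2000
p_values = [null_p_value(rng) for _ in range(NUM_TESTS)]

false_positive_rate = sum(p < 0.05 for p in p_values) / NUM_TESTS
print(f"share of 'significant' results on pure noise: {false_positive_rate:.3f}")
```

Roughly one test in twenty clears the 0.05 threshold even though there is nothing to find, so reporting only the tests that "worked" would present noise as a discovery.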

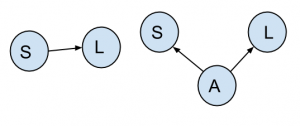

9. Which of the following design terms best applies to the figure above?

a) Correlation

b) Confounding

c) Causation

d) None of the mentioned

Answer: b) Confounding can be dealt with either at the study design stage, or at the analysis stage.


10. Which of the following approaches should be used if you can’t fix the variable?

a) randomize it

b) non stratify it

c) generalize it

d) none of the mentioned

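Answer: a) If you can’t fix the variable, stratify it; if you can’t stratify it, randomize it. Stratifying is not among the options, so randomizing is the correct choice here.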
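Stratifying and randomizing can also be combined. The sketch below is a hypothetical illustration: users are grouped by a variable that cannot be fixed (a made-up device type), and treatment is then assigned at random within each group so both arms see the same mix.

```python
import random
from collections import defaultdict


def stratified_assignment(units, stratum_of, seed=0):
    """Randomly split each stratum in half between treatment and control."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)

    assignment = {}
    for members in strata.values():
        rng.shuffle(members)  # randomize order within the stratum
        half = len(members) // 2
        for unit in members[:half]:
            assignment[unit] = "treatment"
        for unit in members[half:]:
            assignment[unit] = "control"
    return assignment


# Made-up users: every third one is on mobile, the rest on desktop.
users = [(f"user{i}", "mobile" if i % 3 == 0 else "desktop") for i in range(60)]
assignment = stratified_assignment(users, stratum_of=lambda u: u[1])

treated_mobile = sum(1 for u in users
                     if u[1] == "mobile" and assignment[u] == "treatment")
treated_desktop = sum(1 for u in users
                      if u[1] == "desktop" and assignment[u] == "treatment")
print(f"treated: {treated_mobile} mobile, {treated_desktop} desktop")
```

Because the split happens inside each stratum, device type cannot end up unevenly distributed between the two arms by chance.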
