Why Big Data will take the World by Storm in 2018

Category: Hadoop | Posted: Dec 18, 2017 | By: Serena Josh

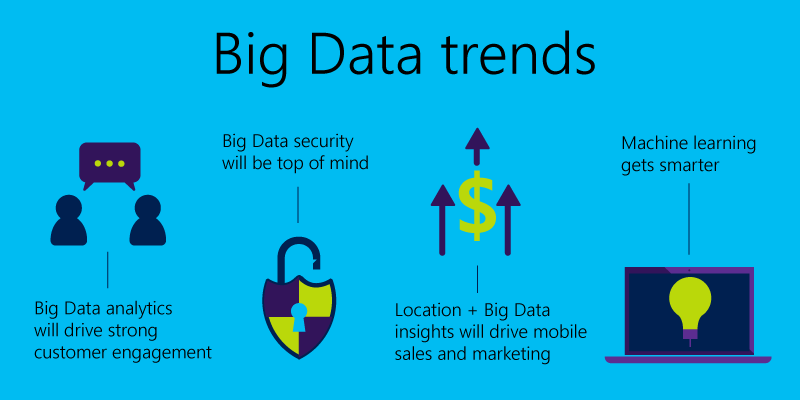

Big Data is the next big thing in the tech world today, with enterprises around the world rushing to invest in technologies that deal with it. This means 2018 holds a lot of promise in terms of emerging trends in this exciting domain. Below are a few groundbreaking trends we expect to emerge.

1. Spark and Machine Learning light up Big Data

Apache Spark, once known mainly as a component of the Hadoop ecosystem, is now becoming the Big Data platform of choice for many businesses.

In a survey of Data Architects, BI Analysts, and IT Managers, almost 70 percent of participants said they preferred Spark over MapReduce. MapReduce is batch-oriented and is a poor fit for interactive applications or real-time stream processing.

Computation-intensive Machine Learning, graph algorithms, and AI are all being used to better harness the potential of Big Data in critical areas such as healthcare. Microsoft Azure Machine Learning is user-friendly and integrates easily with existing Microsoft platforms. This is part of an effort to open up Machine Learning to the masses, which will lead to models and applications that generate many petabytes of data. As smart systems and Machine Learning spread, the market will turn to self-service software providers to transform that data into a form end users can consume.

2. Convergence of IoT, Cloud, and Big Data will create new opportunities for Self-Service Analytics

It is predicted that, in the coming months, nearly everything will come equipped with a sensor that feeds data lakes and, in turn, the global pool of data. This is the core idea behind combining IoT and the cloud: IoT devices generate enormous volumes of structured and unstructured data, and a large portion of that data is consumed by cloud services. This data is not homogeneous; it lives in many different systems, both relational and non-relational, ranging from NoSQL databases to Hadoop clusters.

Many innovations have improved how data is stored and managed and have sped up how it is captured. Accessing and understanding the data itself, however, remains a challenge, with many people working around the clock to make even modest headway. This is why demand is growing for analytical tools, especially ones that can seamlessly connect to and integrate a wide variety of cloud-hosted data services. Such tools will help businesses tap into the hidden opportunities in their IoT investments.

3. Self-service data preparation will become mainstream as end users begin to shape Big Data

Making Hadoop data easily accessible to business users poses a considerable challenge to enterprises worldwide, and Self-Service Analytics platforms play a crucial role in meeting it. Business users now focus mainly on minimizing the time and complexity of the data preparation that Data Analysis requires. This matters even more when handling a variety of data formats and types.

With agile self-service data preparation tools, Hadoop data can be prepared right at the source and made available as snapshots for faster, easier exploration. Vendors in this space, such as Alteryx, Trifacta, and Paxata, have focused on end-user data preparation for Big Data. This means late Hadoop adopters have a lot to gain, since the barriers to entry are significantly lower.


4. Hadoop will become an enterprise standard

One of the most important Big Data trends is Hadoop becoming a core part of the enterprise IT landscape. In 2018, we will see greater investment in the governance and security aspects of enterprise systems. A good example is Apache Sentry, which enforces fine-grained, role-based authorization for data and metadata stored on a Hadoop cluster. Apache Atlas, created as part of the data governance initiative, enables organizations to apply consistent data classification across the data ecosystem, and Apache Ranger offers centralized security administration for Hadoop.
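To make "fine-grained, role-based authorization" more concrete, here is a minimal sketch of the general idea: users belong to groups, groups are assigned roles, and roles are granted privileges on objects in a database > table > column hierarchy, where a grant on a parent object covers its children. This is a hypothetical, in-memory illustration, not Apache Sentry's API; the class names, role names, and database/table names are all invented for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Privilege:
    """A hypothetical grant: an action on a database, optionally narrowed to a table/column."""
    action: str                   # e.g. "SELECT" or "INSERT"
    database: str
    table: Optional[str] = None   # None = every table in the database
    column: Optional[str] = None  # None = every column in the table

    def covers(self, action, database, table, column):
        # A database-level grant covers its tables; a table-level grant covers its columns.
        if self.action != action or self.database != database:
            return False
        if self.table is not None and self.table != table:
            return False
        if self.column is not None and self.column != column:
            return False
        return True


@dataclass
class Policy:
    role_privileges: dict = field(default_factory=dict)  # role -> set of Privilege
    group_roles: dict = field(default_factory=dict)      # group -> set of role names

    def grant(self, role, privilege):
        self.role_privileges.setdefault(role, set()).add(privilege)

    def assign(self, group, role):
        self.group_roles.setdefault(group, set()).add(role)

    def is_allowed(self, user_groups, action, database, table=None, column=None):
        # Access is granted if any role of any of the user's groups holds a covering privilege.
        for group in user_groups:
            for role in self.group_roles.get(group, ()):
                for priv in self.role_privileges.get(role, ()):
                    if priv.covers(action, database, table, column):
                        return True
        return False


# Hypothetical roles and objects, for illustration only.
policy = Policy()
policy.grant("analyst", Privilege("SELECT", "sales", "orders"))
policy.grant("admin", Privilege("INSERT", "sales"))
policy.assign("bi_team", "analyst")

print(policy.is_allowed({"bi_team"}, "SELECT", "sales", "orders", "amount"))  # True
print(policy.is_allowed({"bi_team"}, "SELECT", "sales", "customers"))         # False
print(policy.is_allowed({"bi_team"}, "INSERT", "sales", "orders"))            # False
```

Routing permissions through roles rather than individual users is what keeps such policies manageable at scale: adding an analyst to the `bi_team` group is enough to give them exactly the `analyst` role's access.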

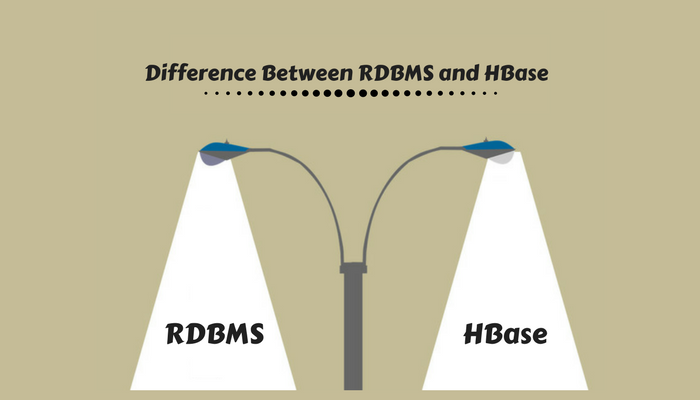

Users have come to expect the kinds of capabilities found in enterprise-grade RDBMS platforms. The latest Big Data technologies are now bringing those capabilities into focus, which removes a major barrier to enterprise adoption.


5. The rise of metadata catalogs will help people find analysis-worthy Big Data

For a long time, enterprises have discarded data simply because there was too much of it to process.

Metadata catalogs help users discover and understand relevant data worth analyzing with self-service tools. Companies such as Informatica, Alation, and Waterline are filling this gap in customer needs by using Machine Learning to automate the work of locating data in Hadoop. Their catalogs file data using tags, reveal relationships between data assets, and even offer query suggestions through searchable UIs. This helps both data consumers and data stewards reduce the time it takes to locate, trust, and accurately check the data. In 2018, we will see more awareness of and demand for self-service discovery, which will grow as a natural extension of Self-Service Analytics.
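The core mechanics of a tag-based catalog can be sketched in a few lines: each data asset carries tags and links to related assets, and an inverted index from tag to asset makes tag search fast. This is a hypothetical, minimal sketch, not how Informatica, Alation, or Waterline work. In particular, those products apply tags automatically with Machine Learning, whereas here tags are supplied by hand, and the HDFS-style paths are invented examples.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Asset:
    path: str                                   # hypothetical HDFS-style location
    tags: set = field(default_factory=set)
    related: set = field(default_factory=set)   # paths of related assets


class Catalog:
    def __init__(self):
        self.assets = {}                 # path -> Asset
        self.by_tag = defaultdict(set)   # inverted index: tag -> asset paths

    def register(self, path, tags):
        asset = self.assets.setdefault(path, Asset(path))
        for tag in tags:
            asset.tags.add(tag)
            self.by_tag[tag].add(path)
        return asset

    def relate(self, a, b):
        # Relationships are stored symmetrically; both assets must already be registered.
        self.assets[a].related.add(b)
        self.assets[b].related.add(a)

    def search(self, *tags):
        """Return the paths that carry every requested tag, sorted for stable output."""
        if not tags:
            return []
        matches = set.intersection(*(self.by_tag.get(t, set()) for t in tags))
        return sorted(matches)


catalog = Catalog()
catalog.register("/data/sales/orders", {"sales", "pii"})
catalog.register("/data/sales/returns", {"sales"})
catalog.register("/data/hr/employees", {"hr", "pii"})
catalog.relate("/data/sales/orders", "/data/sales/returns")

print(catalog.search("sales"))         # ['/data/sales/orders', '/data/sales/returns']
print(catalog.search("sales", "pii"))  # ['/data/sales/orders']
print(sorted(catalog.assets["/data/sales/orders"].related))  # ['/data/sales/returns']
```

The inverted index is the design choice that matters here: a search only touches the assets listed under the requested tags, so it stays cheap even when the catalog covers a very large number of files.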


Conclusion

Big Data is not just a new technology; it is the only way to develop AI in a practical timeframe. Once AI is achieved, everything in IT will speed up exponentially, giving birth to technologies no one has even thought of yet!
